I got Hadoop running on Windows 2012 and Ubuntu easily by following the instructions available online. However, I struggled to get it running correctly on an iMac, and I could not find any reliable instructions online. So here is how I set it up on an iMac running macOS Sierra.

-

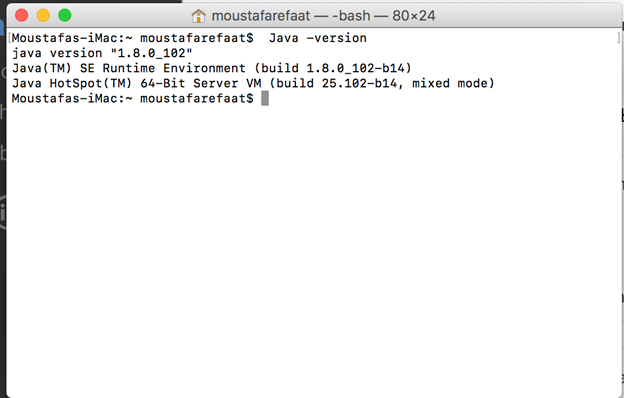

Verify that you have Java installed on your machine
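One way to do this check from a terminal is sketched below. The helper name `check_java` is my own and not part of any tool; it only assumes that `java -version` prints the installed version, which it does on stderr.

```shell
# Hypothetical helper: report whether a working java executable is on the PATH.
check_java() {
  if command -v java >/dev/null 2>&1; then
    # java -version writes to stderr, so redirect it to stdout.
    # On macOS a stub /usr/bin/java can exist without a JDK; in that case
    # this command fails and the function returns its non-zero status.
    java -version 2>&1
  else
    echo "java not found on PATH"
    return 1
  fi
}

check_java || echo "Install a JDK before continuing"
```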

-

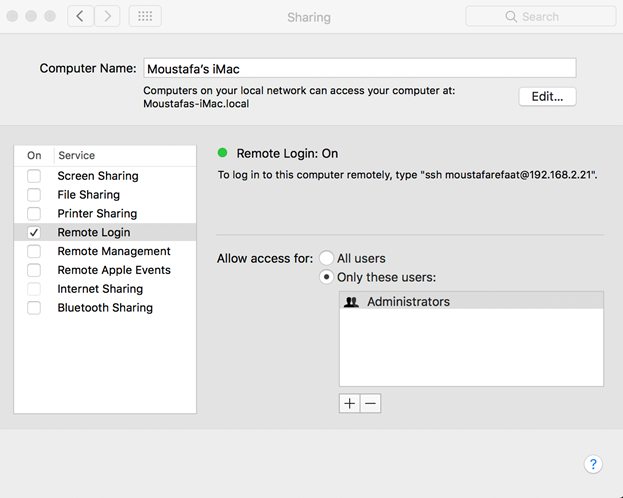

From System Preferences -> Sharing, enable "Remote Login".

- Execute the following command

$ ssh localhost

The authenticity of host 'localhost (::1)' can't be established.

ECDSA key fingerprint is

- If the above is printed, execute

$ ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa

Generating public/private dsa key pair.

Your identification has been saved in /Users/moustafarefaat/.ssh/id_dsa.

Your public key has been saved in /Users/moustafarefaat/.ssh/id_dsa.pub.

The key fingerprint is:

SHA256:GhGErnXItqkupPq/BorbfKQge2aPWZTXowlCW1ADkRc moustafarefaat@Moustafas-iMac.local

The key's randomart image is:

+---[DSA 1024]----+

| +=E+o |

| ..o. . |

| .+... |

| . o*..o |

| o+++o S |

|o.oo+o = . |

|=+ =. + |

|*o*+o |

|+OB=+. |

+----[SHA256]-----+

$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys
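After the public key has been appended, it is worth confirming that ssh no longer asks for a password, because the Hadoop start scripts log in to localhost over ssh. The sketch below is one way to do that; `check_passwordless_ssh` is a name I made up, and the 5 second timeout is an arbitrary choice.

```shell
# Hypothetical helper: succeed only if ssh can log in to localhost without prompting.
check_passwordless_ssh() {
  # BatchMode=yes makes ssh fail instead of asking for a password or passphrase
  ssh -o BatchMode=yes -o ConnectTimeout=5 localhost true 2>/dev/null
}

# sshd can refuse an authorized_keys file that other users may write to,
# so tighten its permissions first if the file exists
[ -f "$HOME/.ssh/authorized_keys" ] && chmod 600 "$HOME/.ssh/authorized_keys"

if check_passwordless_ssh; then
  echo "passwordless ssh to localhost works"
else
  echo "ssh to localhost still needs a password or is not reachable"
fi
```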

- Find your Java home directory by running

/usr/libexec/java_home

/Library/Java/JavaVirtualMachines/jdk1.8.0_102.jdk/Contents/Home
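Instead of copying this path by hand, the same tool can fill in `JAVA_HOME` for you. This is only a sketch: `detect_java_home` is a hypothetical helper name, and the `-v 1.8` filter assumes you want the Java 8 JDK shown above.

```shell
# Hypothetical helper: print the Java 8 home reported by macOS, or fail if there is none.
detect_java_home() {
  # /usr/libexec/java_home exists only on macOS, so check for it first
  if [ -x /usr/libexec/java_home ]; then
    # -v 1.8 asks for a Java 8 JDK; drop it to accept the default JDK
    /usr/libexec/java_home -v 1.8 2>/dev/null
  else
    return 1
  fi
}

if detected="$(detect_java_home)"; then
  export JAVA_HOME="$detected"
  echo "JAVA_HOME=$JAVA_HOME"
else
  echo "Could not detect a Java 8 JDK; set JAVA_HOME by hand"
fi
```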

-

Download Hadoop from

http://apache.mirror.rafal.ca/hadoop/common/

I copied the downloaded directory to my machine under the name hadoop and moved it to the /Users/Shared/hadoop folder.

In the distribution, edit the file etc/hadoop/hadoop-env.sh to define some parameters as follows:

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# Set Hadoop-specific environment variables here.

# The only required environment variable is JAVA_HOME. All others are

# optional. When running a distributed configuration it is best to

# set JAVA_HOME in this file, so that it is correctly defined on

# remote nodes.

# The java implementation to use.

export JAVA_HOME=/Library/Java/JavaVirtualMachines/jdk1.8.0_102.jdk/Contents/Home

#Moustafa

export HADOOP_HOME=/users/shared/hadoop

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib/native"

# The jsvc implementation to use. Jsvc is required to run secure datanodes

# that bind to privileged ports to provide authentication of data transfer

# protocol. Jsvc is not required if SASL is configured for authentication of

# data transfer protocol using non-privileged ports.

#export JSVC_HOME=${JSVC_HOME}

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

# Extra Java CLASSPATH elements. Automatically insert capacity-scheduler.

for f in $HADOOP_HOME/contrib/capacity-scheduler/*.jar; do

if [ "$HADOOP_CLASSPATH" ]; then

export HADOOP_CLASSPATH=$HADOOP_CLASSPATH:$f

else

export HADOOP_CLASSPATH=$f

fi

done

# The maximum amount of heap to use, in MB. Default is 1000.

#export HADOOP_HEAPSIZE=2000

#export HADOOP_NAMENODE_INIT_HEAPSIZE=""

# Extra Java runtime options. Empty by default.

export HADOOP_OPTS="$HADOOP_OPTS -Djava.net.preferIPv4Stack=true"

# Command specific options appended to HADOOP_OPTS when specified

export HADOOP_NAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_NAMENODE_OPTS"

export HADOOP_DATANODE_OPTS="-Dhadoop.security.logger=ERROR,RFAS $HADOOP_DATANODE_OPTS"

export HADOOP_SECONDARYNAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_SECONDARYNAMENODE_OPTS"

export HADOOP_NFS3_OPTS="$HADOOP_NFS3_OPTS"

export HADOOP_PORTMAP_OPTS="-Xmx512m $HADOOP_PORTMAP_OPTS"

# The following applies to multiple commands (fs, dfs, fsck, distcp etc)

export HADOOP_CLIENT_OPTS="-Xmx512m $HADOOP_CLIENT_OPTS"

#HADOOP_JAVA_PLATFORM_OPTS="-XX:-UsePerfData $HADOOP_JAVA_PLATFORM_OPTS"

# On secure datanodes, user to run the datanode as after dropping privileges.

# This **MUST** be uncommented to enable secure HDFS if using privileged ports

# to provide authentication of data transfer protocol. This **MUST NOT** be

# defined if SASL is configured for authentication of data transfer protocol

# using non-privileged ports.

export HADOOP_SECURE_DN_USER=${HADOOP_SECURE_DN_USER}

# Where log files are stored. $HADOOP_HOME/logs by default.

#export HADOOP_LOG_DIR=${HADOOP_LOG_DIR}/$USER

# Where log files are stored in the secure data environment.

export HADOOP_SECURE_DN_LOG_DIR=${HADOOP_LOG_DIR}/${HADOOP_HDFS_USER}

###

# HDFS Mover specific parameters

###

# Specify the JVM options to be used when starting the HDFS Mover.

# These options will be appended to the options specified as HADOOP_OPTS

# and therefore may override any similar flags set in HADOOP_OPTS

#

# export HADOOP_MOVER_OPTS=""

###

# Advanced Users Only!

###

# The directory where pid files are stored. /tmp by default.

# NOTE: this should be set to a directory that can only be written to by

# the user that will run the hadoop daemons. Otherwise there is the

# potential for a symlink attack.

export HADOOP_PID_DIR=${HADOOP_PID_DIR}

export HADOOP_SECURE_DN_PID_DIR=${HADOOP_PID_DIR}

# A string representing this instance of hadoop. $USER by default.

export HADOOP_IDENT_STRING=$USER

Edit core-site.xml to set the fs.defaultFS location to localhost. Many websites recommend using port 9000, but for some reason that port was already in use on my iMac, so I used 7500 instead.

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:7500</value>

</property>

</configuration>
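If you are not sure whether a port is free before putting it in core-site.xml, you can ask the system which ports have a listener. The sketch below uses `lsof`, which ships with macOS; the helper names `port_in_use` and `describe_port` are my own.

```shell
# Hypothetical helper: succeed if something is listening on the given local TCP port.
port_in_use() {
  # -nP skips host and port name lookups; -sTCP:LISTEN keeps only listening sockets
  lsof -nP -iTCP:"$1" -sTCP:LISTEN >/dev/null 2>&1
}

# Hypothetical helper: print a one-line verdict for a port.
describe_port() {
  if port_in_use "$1"; then
    echo "port $1 is in use"
  else
    echo "port $1 looks free"
  fi
}

# Check the commonly recommended port and the one used in this post
describe_port 9000
describe_port 7500
```

A port can look free here and still be taken by a process owned by another user, since `lsof` without administrative privileges only shows your own processes.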

Create a folder named data in /Users/Shared/hadoop and edit the file hdfs-site.xml as follows. Otherwise Hadoop will create its files in the /tmp folder.

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.namenode.name.dir</name>

<value>/Users/Shared/hadoop/data/hadoop-${user.name}</value>

<description>Where the NameNode stores its metadata.</description>

</property>

</configuration>

Next, edit the file yarn-site.xml as follows:

<?xml version="1.0"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

Now you are ready to start. From a terminal window, run the command:

. /users/shared/hadoop/etc/hadoop/hadoop-env.sh

Notice the dot followed by a space at the start: this makes the script run in the current session, so the variables it exports stay set. If you then run the command printenv, you should see all the variables set up correctly.
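The difference between running a script and sourcing it with the dot can be seen with a small stand-in script. Everything here is illustrative: `demo_env.sh` and `DEMO_HADOOP_HOME` are made-up names, not part of Hadoop.

```shell
# Write a tiny stand-in script that exports one variable
demo_dir="$(mktemp -d 2>/dev/null || mktemp -d -t hadoopdemo)"
printf 'export DEMO_HADOOP_HOME=/users/shared/hadoop\n' > "$demo_dir/demo_env.sh"

# Running the script starts a child shell; its exports vanish when it exits
sh "$demo_dir/demo_env.sh"
echo "after running:  '${DEMO_HADOOP_HOME:-}'"

# Sourcing with ". file" runs the lines in the current shell, so the export persists
. "$demo_dir/demo_env.sh"
echo "after sourcing: '${DEMO_HADOOP_HOME:-}'"

rm -r "$demo_dir"
```

Assuming `DEMO_HADOOP_HOME` was not set beforehand, the first echo shows an empty value and the second shows the path.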

Now run the command to format the NameNode. You should get output like the following:

$HADOOP_HOME/bin/hdfs namenode -format

2016-11-09 16:19:18,802 INFO [main] namenode.NameNode (LogAdapter.java:info(47)) - STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = moustafas-imac.local/192.168.2.21

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.7.3

STARTUP_MSG: classpath = …

STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z

STARTUP_MSG: java = 1.8.0_102

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at moustafas-imac.local/192.168.2.21

************************************************************/

Open the hosts file in /private/etc and add your Hadoop machine name. Note that you will have to save the edited file to Documents and then copy it back with administrative privileges.

##

# Host Database

#

# localhost is used to configure the loopback interface

# when the system is booting. Do not change this entry.

##

127.0.0.1 localhost

255.255.255.255 broadcasthost

127.0.1.1 moustafas-imac.local

::1 localhost
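The hosts entry can also be added from the terminal. The sketch below defines a hypothetical helper, `add_host_entry`, that appends a line only if the name is not already listed, and tries it on a scratch file rather than the real hosts file.

```shell
# Hypothetical helper: append "ip name" to a hosts-style file unless the name is already listed.
add_host_entry() {
  hosts_file="$1"; ip="$2"; name="$3"
  # -F treats the name as a literal string, -w requires a whole-word match
  if grep -qwF -- "$name" "$hosts_file" 2>/dev/null; then
    echo "$name already present in $hosts_file"
  else
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    echo "added $name to $hosts_file"
  fi
}

# Try it on a scratch file first
scratch="$(mktemp 2>/dev/null || mktemp -t hosts)"
printf '127.0.0.1 localhost\n' > "$scratch"
add_host_entry "$scratch" 127.0.1.1 moustafas-imac.local
add_host_entry "$scratch" 127.0.1.1 moustafas-imac.local   # second call changes nothing
cat "$scratch"
```

To change the real file you need administrative privileges, for example `echo '127.0.1.1 moustafas-imac.local' | sudo tee -a /private/etc/hosts`.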

Now you are ready to start HDFS. Run the command:

$HADOOP_HOME/sbin/start-dfs.sh

To start YARN, run:

$HADOOP_HOME/sbin/start-yarn.sh
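Once both scripts have finished, the JDK tool `jps` lists the running Java processes, which is a quick way to see whether the daemons came up. The sketch below assumes the five daemons of a single-node Hadoop 2.x setup; `missing_daemons` is a helper name I made up.

```shell
# Hypothetical helper: read `jps` output on stdin and print the expected daemons that are absent.
missing_daemons() {
  running="$(cat)"
  missing=""
  for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # jps prints "<pid> <ClassName>"; compare the class name as the whole second field
    if ! printf '%s\n' "$running" | awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
      missing="$missing $daemon"
    fi
  done
  # Strip the leading space left by the accumulation above
  echo "${missing# }"
}

if command -v jps >/dev/null 2>&1; then
  echo "missing daemons: $(jps | missing_daemons)"
else
  echo "jps not found; it is part of the JDK"
fi
```

If a daemon is listed as missing, its log file under $HADOOP_HOME/logs is the first place to look.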

To test the setup, run the following commands:

$HADOOP_HOME/bin/hadoop fs -mkdir /user

$HADOOP_HOME/bin/hadoop fs -mkdir /user/moustafarefaat

$HADOOP_HOME/bin/hadoop fs -mkdir /user/moustafarefaat/input

$HADOOP_HOME/bin/hadoop fs -put $HADOOP_HOME/etc/hadoop/*.* /user/moustafarefaat/input

$HADOOP_HOME/bin/hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar grep input output 'dfs[a-z.]+'

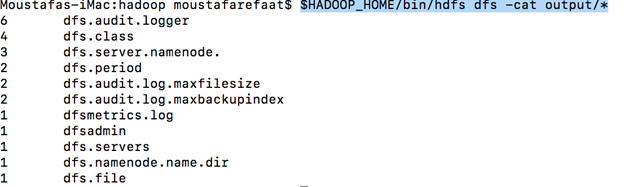

$HADOOP_HOME/bin/hdfs dfs -cat output/*

You should see results like the ones below:

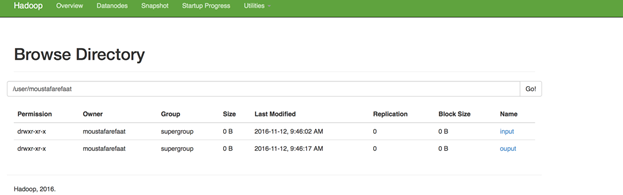

If you browse to http://localhost:50070/explorer.html#/user/moustafarefaat you should see the files in HDFS, and if you browse to http://localhost:19888/jobhistory/app you should see the job history.

Now your Hadoop on iMac is ready for use.

Hope this helps